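Hi everyone, I'm Edison.

Today is the last working day before the Mid-Autumn Festival. Hang in there, the holiday is almost here!

Lately I have been studying LLMs, and everyone seems to be talking about AI Agents, calling them the new paradigm for building LLM applications over the next few years. So what is an AI Agent, and how can you quickly build one?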

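AI Agent: an assistant that can carry out tasks for you

Academia and industry have proposed various definitions of the term "AI Agent". Among them, OpenAI defines an AI Agent as "a system driven by a large language model as its brain, with the ability to autonomously understand, perceive, plan, remember, and use tools, and able to carry out complex tasks automatically." In plain words: most of the time you give it the end goal you want to reach, and it delivers the result directly, without you having to manage the process.

NOTE: If what separates humans from animals is that humans use tools, the same goes for an Agent versus a large model. We can picture the Agent and the LLM as an organism and its brain: the Agent has hands and feet and can do the work itself, while the LLM is its brain. For example, a plain LLM can probably only output a recipe, telling you which ingredients and steps you need. An Agent might not only provide the recipe and steps but also pick suitable ingredients for your needs, even place the order automatically, monitor the cooking, make sure the dish tastes right, and finally serve you the meal.

How does an AI Agent work?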

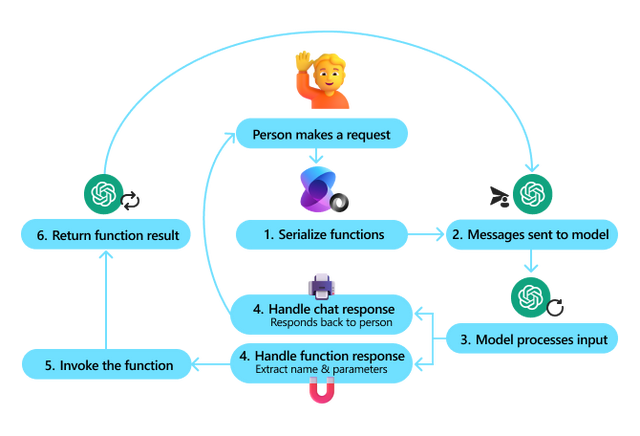

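The architecture of an AI Agent is the foundation of its intelligent behavior. It usually includes key components such as perception, planning, memory, tool use, and action, which work together to produce that behavior.

The workflow of an AI Agent is essentially a continuous loop.

It starts by perceiving the environment, goes through information processing, planning, and decision making, and then executes an action. Finally, it adjusts based on the execution result and the feedback from the environment in order to improve future actions and decisions.

With this structured, layered approach, an AI Agent can process information effectively, make decisions, and carry out tasks in complex environments.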
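To make the loop above a bit more concrete, here is a minimal sketch of perceive, plan, act, and feedback in C#. Everything in it is made up for illustration: the `ToyAgent` class, its integer "environment", and the goal of reaching a target value are assumptions, and in a real Agent the planning step would be an LLM call rather than a comparison.

```csharp
using System;
using System.Collections.Generic;

// Usage: drive the toy environment from 3 to the target value 6.
var agent = new ToyAgent();
int finalState = agent.Run(state: 3, target: 6);
Console.WriteLine($"Final state: {finalState}");
foreach (var entry in agent.Memory)
    Console.WriteLine(entry);

// Hypothetical toy agent: its only goal is to move an integer to a target value.
public sealed class ToyAgent
{
    private readonly List<string> _memory = new();   // record of past steps
    public IReadOnlyList<string> Memory => _memory;

    // Runs the perceive -> plan -> act -> feedback loop until the goal is met
    // or the step budget runs out, and returns the final environment state.
    public int Run(int state, int target, int maxSteps = 20)
    {
        for (int step = 0; step < maxSteps; step++)
        {
            int observed = state;                        // 1. perceive the environment
            if (observed == target) break;               //    goal reached, stop looping
            int action = observed < target ? 1 : -1;     // 2. plan / decide (an LLM call in a real agent)
            state += action;                             // 3. act on the environment
            _memory.Add($"step {step}: {observed} -> {state}"); // 4. remember the outcome as feedback
        }
        return state;
    }
}
```

The step budget (`maxSteps`) is there because a loop that depends on a model's decisions should not be allowed to run forever.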

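How do you build an AI Agent?

The industry currently builds AI Agents in two main ways:

One is to use a programming language such as Python or C# together with an LLM application framework such as LangChain or Semantic Kernel, integrating an LLM API with the company's internal business APIs to build an Agent for a specific domain.

The other is to use an Agent development and management platform such as Coze, Dify, or AutoGen and assemble an Agent quickly by drag and drop. It is less like development and more like configuring a workflow. You still need to register the capabilities exposed by your company's internal API platform with these platforms, so that the configured Agent can call them as tools.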

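Building an AI Agent with Semantic Kernel

Here we use Semantic Kernel to quickly build a simple WorkOrder Agent (an MES work order assistant). The focus is on how to give the LLM Function Calling capability and on seeing firsthand how the Agent plans and executes tasks. More specific details can wait until I have dug deeper; for now we just want an intuitive feel.

Starting with the end in mind, let's look at the results first:

(1) Without Function Calling, it is just a chatbot

(2) With Function Calling, it can be called an Agent

As you can see, my request actually contains two steps: first update the work order's Quantity, then query the updated work order. We assume both steps require calling the MES WorkOrderService API, which is why the LLM needs Function Calling. The LLM also has to work out the execution plan itself: which step runs first and which runs after.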
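The two-step request can be pictured roughly as follows. This is only a sketch of the idea behind Function Calling, not how Semantic Kernel implements it: the `ToolCall` record, the `ToolDispatcher` class, and the hard-coded `plan` are hypothetical stand-ins for what the model would return, and the in-memory dictionary replaces the real MES API.

```csharp
using System;
using System.Collections.Generic;

// In-memory stand-in for the MES data; a real tool would call WorkOrderService.
var quantities = new Dictionary<string, int> { ["9050100"] = 100 };

// Tools the host registers, keyed by the name the model uses to request them.
var tools = new Dictionary<string, Func<Dictionary<string, string>, string>>
{
    ["ReduceWorkOrderQuantity"] = input =>
    {
        quantities[input["orderName"]] = int.Parse(input["newQuantity"]);
        return "Operate Succeed!";
    },
    ["GetWorkOrderInfo"] = input =>
        $"{input["orderName"]}: Quantity={quantities[input["orderName"]]}",
};

// Hard-coded here; in a real Agent the model produces this ordered plan.
var plan = new List<ToolCall>
{
    new ToolCall("ReduceWorkOrderQuantity",
        new Dictionary<string, string> { ["orderName"] = "9050100", ["newQuantity"] = "50" }),
    new ToolCall("GetWorkOrderInfo",
        new Dictionary<string, string> { ["orderName"] = "9050100" }),
};

foreach (var result in ToolDispatcher.Execute(plan, tools))
    Console.WriteLine(result);

// Hypothetical shape of a single tool call requested by the model.
public sealed record ToolCall(string Name, Dictionary<string, string> Args);

public static class ToolDispatcher
{
    // Executes the calls in the planned order and collects each result,
    // so the results can be handed back to the model for the final answer.
    public static List<string> Execute(
        IEnumerable<ToolCall> plan,
        IReadOnlyDictionary<string, Func<Dictionary<string, string>, string>> tools)
    {
        var results = new List<string>();
        foreach (var call in plan)
        {
            results.Add(tools.TryGetValue(call.Name, out var tool)
                ? tool(call.Args)
                : $"Unknown tool: {call.Name}");
        }
        return results;
    }
}
```

The order matters: querying before updating would return the old Quantity, which is why the model has to plan the sequence and not just pick the right functions.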

The sample code is structured as follows:

Key parts of the code:

(1) Shared

OpenAiConfiguration.cs

    public class OpenAiConfiguration
    {
        public string Provider { get; set; }
        public string ModelId { get; set; }
        public string EndPoint { get; set; }
        public string ApiKey { get; set; }

        public OpenAiConfiguration(string modelId, string endPoint, string apiKey)
        {
            Provider = ConfigConstants.LLMProviders.OpenAI; // Default OpenAI-Compatible LLM API Provider
            ModelId = modelId;
            EndPoint = endPoint;
            ApiKey = apiKey;
        }

        public OpenAiConfiguration(string provider, string modelId, string endPoint, string apiKey)
        {
            Provider = provider;
            ModelId = modelId;
            EndPoint = endPoint;
            ApiKey = apiKey;
        }
    }

CustomLLMApiHandler.cs

    public class CustomLLMApiHandler : HttpClientHandler
    {
        private readonly string _openAiProvider;
        private readonly string _openAiBaseAddress;

        public CustomLLMApiHandler(string openAiProvider, string openAiBaseAddress)
        {
            _openAiProvider = openAiProvider;
            _openAiBaseAddress = openAiBaseAddress;
        }

        // Redirects the default OpenAI chat-completions request to the configured provider's host and path
        protected override async Task<HttpResponseMessage> SendAsync(
            HttpRequestMessage request, CancellationToken cancellationToken)
        {
            UriBuilder uriBuilder;
            Uri uri = new Uri(_openAiBaseAddress);
            switch (request.RequestUri?.LocalPath)
            {
                case "/v1/chat/completions":
                    switch (_openAiProvider)
                    {
                        case ConfigConstants.LLMProviders.ZhiPuAI:
                            uriBuilder = new UriBuilder(request.RequestUri)
                            {
                                Scheme = "https",
                                Host = uri.Host,
                                Path = ConfigConstants.LLMApiPaths.ZhiPuAIChatCompletions,
                            };
                            request.RequestUri = uriBuilder.Uri;
                            break;
                        default: // Default: OpenAI-Compatible API Providers
                            uriBuilder = new UriBuilder(request.RequestUri)
                            {
                                Scheme = "https",
                                Host = uri.Host,
                                Path = ConfigConstants.LLMApiPaths.OpenAIChatCompletions,
                            };
                            request.RequestUri = uriBuilder.Uri;
                            break;
                    }
                    break;
            }

            HttpResponseMessage response = await base.SendAsync(request, cancellationToken);
            return response;
        }
    }

(2) WorkOrderService

Here I simply simulate the API logic in memory; you can use HttpClient to call a real API instead.
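Before the mock itself, here is a hedged sketch of what the HttpClient variant might look like. None of it comes from the sample project: the `WorkOrderApiClient` class, the `workorders/{orderName}` route, and the base URL are hypothetical, and the `WorkOrder` shape is only inferred from the properties the mock data sets. A `StubHandler` stands in for the real MES endpoint so the sketch runs offline.

```csharp
using System;
using System.Net;
using System.Net.Http;
using System.Net.Http.Json;
using System.Threading;
using System.Threading.Tasks;

// Demo: the stub handler answers instead of a real server (hypothetical base URL).
var http = new HttpClient(new StubHandler())
{
    BaseAddress = new Uri("https://mes.example.invalid/api/")
};
var client = new WorkOrderApiClient(http);
var order = await client.GetWorkOrderInfoAsync("9050100");
Console.WriteLine($"{order?.WorkOrderName}: {order?.Quantity} ({order?.Status})");

// Assumed model shape, inferred from the fields used by the mock data.
public sealed class WorkOrder
{
    public string WorkOrderName { get; set; } = "";
    public string ProductName { get; set; } = "";
    public string ProductVersion { get; set; } = "";
    public int Quantity { get; set; }
    public string Status { get; set; } = "";
}

public sealed class WorkOrderApiClient
{
    private readonly HttpClient _http;
    public WorkOrderApiClient(HttpClient http) => _http = http;

    // GET {BaseAddress}workorders/{orderName}; the route is an assumption.
    // Returns null when the server answers 404 Not Found.
    public async Task<WorkOrder?> GetWorkOrderInfoAsync(string orderName)
    {
        using var response = await _http.GetAsync($"workorders/{Uri.EscapeDataString(orderName)}");
        if (response.StatusCode == HttpStatusCode.NotFound) return null;
        response.EnsureSuccessStatusCode();
        return await response.Content.ReadFromJsonAsync<WorkOrder>();
    }
}

// Test double: returns canned JSON for one known order and 404 for anything else.
public sealed class StubHandler : HttpMessageHandler
{
    protected override Task<HttpResponseMessage> SendAsync(
        HttpRequestMessage request, CancellationToken cancellationToken)
    {
        bool found = request.RequestUri!.AbsolutePath.EndsWith("/workorders/9050100");
        var response = found
            ? new HttpResponseMessage(HttpStatusCode.OK)
              {
                  Content = JsonContent.Create(new WorkOrder
                  {
                      WorkOrderName = "9050100", Quantity = 100, Status = "Ready"
                  })
              }
            : new HttpResponseMessage(HttpStatusCode.NotFound);
        return Task.FromResult(response);
    }
}
```

Taking the `HttpClient` through the constructor keeps the client testable: the same class works against the stub here and against a real endpoint once a normal handler and base address are supplied.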

    public class WorkOrderService
    {
        private static List<WorkOrder> workOrders = new List<WorkOrder>
        {
            new WorkOrder { WorkOrderName = "9050100", ProductName = "A5E900100", ProductVersion = "001 / AB", Quantity = 100, Status = "Ready" },
            new WorkOrder { WorkOrderName = "9050101", ProductName = "A5E900101", ProductVersion = "001 / AB", Quantity = 200, Status = "Ready" },
            new WorkOrder { WorkOrderName = "9050102", ProductName = "A5E900102", ProductVersion = "001 / AB", Quantity = 300, Status = "InProcess" },
            new WorkOrder { WorkOrderName = "9050103", ProductName = "A5E900103", ProductVersion = "001 / AB", Quantity = 400, Status = "InProcess" },
            new WorkOrder { WorkOrderName = "9050104", ProductName = "A5E900104", ProductVersion = "001 / AB", Quantity = 500, Status = "Completed" }
        };

        public WorkOrder GetWorkOrderInfo(string orderName)
        {
            return workOrders.Find(o => o.WorkOrderName == orderName);
        }

        public string UpdateWorkOrderStatus(string orderName, string newStatus)
        {
            var workOrder = this.GetWorkOrderInfo(orderName);
            if (workOrder == null)
                return "Operate Failed : The work order is not existing!";
            // Update status if it is valid
            workOrder.Status = newStatus;
            return "Operate Succeed!";
        }

        public string ReduceWorkOrderQuantity(string orderName, int newQuantity)
        {
            var workOrder = this.GetWorkOrderInfo(orderName);
            if (workOrder == null)
                return "Operate Failed : The work order is not existing!";
            // Some business checking logic like this
            if (workOrder.Status == "Completed")
                return "Operate Failed : The work order is completed, can not be reduced!";
            if (newQuantity <= 1 || newQuantity >= workOrder.Quantity)
                return "Operate Failed : The new quantity is invalid!";
            // Update quantity if it is valid
            workOrder.Quantity = newQuantity;
            return "Operate Succeed!";
        }
    }

(3) Form

appsettings.json (the form code loads appsettings.ZhiPu.json, so name the file to match)

    {
      "LLM_API_PROVIDER": "ZhiPuAI",
      "LLM_API_MODEL": "glm-4",
      "LLM_API_BASE_URL": "https://open.bigmodel.cn",
      "LLM_API_KEY": "***********" // Update this value to yours
    }

AgentForm.cs

    public partial class ChatForm : Form
    {
        private Kernel _kernel = null;
        private OpenAIPromptExecutionSettings _settings = null;
        private IChatCompletionService _chatCompletion = null;
        private ChatHistory _chatHistory = null;

        public ChatForm()
        {
            InitializeComponent();
        }

        private void ChatForm_Load(object sender, EventArgs e)
        {
            var configuration = new ConfigurationBuilder().AddJsonFile("appsettings.ZhiPu.json");
            var config = configuration.Build();
            var openAiConfiguration = new OpenAiConfiguration(
                config.GetSection("LLM_API_PROVIDER").Value,
                config.GetSection("LLM_API_MODEL").Value,
                config.GetSection("LLM_API_BASE_URL").Value,
                config.GetSection("LLM_API_KEY").Value);
            // Route requests through the custom handler so they reach the configured provider
            var openAiClient = new HttpClient(new CustomLLMApiHandler(openAiConfiguration.Provider, openAiConfiguration.EndPoint));
            _kernel = Kernel.CreateBuilder()
                .AddOpenAIChatCompletion(openAiConfiguration.ModelId, openAiConfiguration.ApiKey, httpClient: openAiClient)
                .Build();

            _chatCompletion = _kernel.GetRequiredService<IChatCompletionService>();

            _chatHistory = new ChatHistory();
            _chatHistory.AddSystemMessage("You are one WorkOrder Assistant.");
        }

        /// <summary>
        /// Import Custom Plugin/Functions to Kernel
        /// </summary>
        private void cbxUseFunctionCalling_CheckedChanged(object sender, EventArgs e)
        {
            if (cbxUseFunctionCalling.Checked)
            {
                _kernel.Plugins.Add(KernelPluginFactory.CreateFromFunctions("WorkOrderHelperPlugin",
                    new List<KernelFunction>
                    {
                        _kernel.CreateFunctionFromMethod((string orderName) =>
                        {
                            var workOrderRepository = new WorkOrderService();
                            return workOrderRepository.GetWorkOrderInfo(orderName);
                        }, "GetWorkOrderInfo", "Get WorkOrder's Detail Information"),
                        _kernel.CreateFunctionFromMethod((string orderName, int newQuantity) =>
                        {
                            var workOrderRepository = new WorkOrderService();
                            return workOrderRepository.ReduceWorkOrderQuantity(orderName, newQuantity);
                        }, "ReduceWorkOrderQuantity", "Reduce WorkOrder's Quantity to new Quantity"),
                        _kernel.CreateFunctionFromMethod((string orderName, string newStatus) =>
                        {
                            var workOrderRepository = new WorkOrderService();
                            return workOrderRepository.UpdateWorkOrderStatus(orderName, newStatus);
                        }, "UpdateWorkOrderStatus", "Update WorkOrder's Status to new Status")
                    }
                ));

                _settings = new OpenAIPromptExecutionSettings
                {
                    ToolCallBehavior = ToolCallBehavior.AutoInvokeKernelFunctions
                };

                lblTitle.Text = "WorkOrder Agent";
            }
            else
            {
                _kernel.Plugins.Clear();
                _settings = null;
                lblTitle.Text = "AI Chatbot";
            }
        }

        /// <summary>
        /// Send the prompt to AI Model and get the response
        /// </summary>
        private void btnSendPrompt_Click(object sender, EventArgs e)
        {
            if (string.IsNullOrWhiteSpace(tbxPrompt.Text))
            {
                MessageBox.Show("Please input a prompt before sending!", "Warning");
                return;
            }

            _chatHistory.AddUserMessage(tbxPrompt.Text);
            ChatMessageContent chatResponse = null;
            tbxResponse.Clear();

            if (cbxUseFunctionCalling.Checked)
            {
                Task.Run(() =>
                {
                    ShowProcessMessage("AI is handling your request now...");
                    chatResponse = _chatCompletion.GetChatMessageContentAsync(_chatHistory, _settings, _kernel)
                        .GetAwaiter()
                        .GetResult();
                    UpdateResponseContent(chatResponse.ToString());
                    ShowProcessMessage("AI Response:");
                });
            }
            else
            {
                Task.Run(() =>
                {
                    ShowProcessMessage("AI is handling your request now...");
                    chatResponse = _chatCompletion.GetChatMessageContentAsync(_chatHistory, null, _kernel)
                        .GetAwaiter()
                        .GetResult();
                    UpdateResponseContent(chatResponse.ToString());
                    ShowProcessMessage("AI Response:");
                });
            }
        }
    }

Semantic Kernel provides OpenAIPromptExecutionSettings. When we set its ToolCallBehavior to AutoInvokeKernelFunctions, the LLM decides for itself whether it needs to call functions and in what order to call them. These functions must of course be registered in advance so the LLM knows about them:

    _settings = new OpenAIPromptExecutionSettings
    {
        ToolCallBehavior = ToolCallBehavior.AutoInvokeKernelFunctions
    };

Summary

This article briefly introduced the basic concept of an AI Agent and how it works. There are currently two main ways to build an Agent: one is hand-written, code-heavy development, and the other is low-code drag and drop. Finally, the article used C# + Semantic Kernel + the ZhiPu LLM to show how to quickly build an Agent. It is only a demo, but I hope it helps you understand Agents!

Sample source code

Sample for this article: https://github.com/Coder-EdisonZhou/EDT.WorkOrderAgent

Model used in this article: ZhiPu GLM-4

Recommended learning

Microsoft Learn, "Semantic Kernel learning path": https://learn.microsoft.com/zh-cn/dotnet/ai/semantic-kernel-dotnet-overview?wt.mc_id=MVP_397012